We are midway through the 5th month of Gregorian 2026, and the stream of events in the Large Language Model (LLM) / Generative AI (GenAI) space since the start of the year has been relentless, disrupting many established industries and professions. At the end of January, Claude launched a workflow capability that disrupted the market. Legal firms, IT services, and BPO stocks didn’t just dip; they rebased. In the following weeks, Anthropic expanded into deeper legacy‑code tooling and, predictably, several traditional vendors felt the tremors. Whether you call it Co‑work, Agentic Fleets, or wonder What to Name It? (a nod to Maestro Ilaiyaraja), GenAI and the agents built on it are here to stay and to add value. March and April shaped the myth of Mythos* in real time. Add the Antigravity updates, Stitch, and Opus 4.7, and it has been an eventful four months.

Throughout this, the core has remained the same: our job is to conceptualize, build, deploy, polish, and find ever-expanding use cases, adding value through the time saved. We’ve moved past the “cool demo” era and into the age of agentic workflows, systems that don’t just suggest code but remove the mundane, repetitive layers of cognitive labor. The “warm‑blooded” requirement for low‑level logic is, for the first time, being audited directly by the bottom line.

This is no longer just about a chat interface or getting clever completion in your Integrated Development Environment (IDE). We have crossed into a new command structure: you, the Agent Orchestrator, describe the What; the Agent – built of vectors, Graphics Processing Units (GPUs), High Bandwidth Memory (HBM), embeddings, self‑attention, and other mechanisms, esoteric or otherwise – executes the How at scale.

Coding Agents Are Not Magic: A 2026 Strategic POV

The 2026 Reality: From Chatbot to Workforce

In 2023, AI assistants lived in sidebars. By 2026, that paradigm is becoming obsolete, if it isn’t already. We are now deploying Autonomous Coding Agents – systems like Google Antigravity, Claude Code, OpenAI Codex, Continue.dev, and Devin – that operate at the repository level rather than line by line.

The Anatomy of an Agent

Managing an agentic workforce requires understanding the machinery that creates the “illusion” of agency. Every agent is a composite of four layers:

The Policy Engine (The Brain)

State‑of‑the‑art models—Gemini 3, GPT‑5.2, Claude 4.5, Qwen 3.5, DeepSeek R1—serve as the Decision Logic. They don’t just write code; they select the next best action from a constrained set of possibilities.
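One way to picture "selecting the next best action from a constrained set" is a scoring step followed by a filter. The sketch below is illustrative only: the `Action` names, the `choose_action` helper, and the score dictionary standing in for the model's preferences are all assumptions, not any vendor's API.

```python
from enum import Enum


class Action(Enum):
    # Hypothetical action vocabulary; real agents define their own.
    READ_FILE = "read_file"
    EDIT_FILE = "edit_file"
    RUN_TESTS = "run_tests"
    STOP = "stop"


def choose_action(scores: dict[str, float], allowed: set[Action]) -> Action:
    """Return the highest-scoring action among those the agent may take.

    `scores` stands in for the model's preference over action names.
    Actions outside `allowed` are never returned, whatever their score.
    """
    if not allowed:
        raise ValueError("no actions are allowed")
    # Sort by name first so that ties resolve deterministically.
    ranked = sorted(allowed, key=lambda a: a.value)
    return max(ranked, key=lambda a: scores.get(a.value, float("-inf")))
```

The point of the sketch is the constraint: the model may "prefer" an edit, but if editing is not in the allowed set at this step, the policy falls back to the best permitted action.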

The Contextual State Graph (The Memory)

Persistent state graphs track terminal outputs, failed tests, and architectural constraints across sessions lasting days.
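As a rough illustration of what such a memory holds, here is a minimal sketch that keeps the three kinds of information named above and serializes them so a later session can resume. It is a flat record rather than a true graph, and the `AgentState` class and its field names are hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class AgentState:
    """Hypothetical session memory: constraints, failures, terminal history."""

    constraints: list[str] = field(default_factory=list)
    failed_tests: list[str] = field(default_factory=list)
    terminal_log: list[dict[str, str]] = field(default_factory=list)

    def record_command(self, command: str, output: str) -> None:
        # Keep the command and its output together so later steps can cite both.
        self.terminal_log.append({"command": command, "output": output})

    def to_json(self) -> str:
        # Serialize so the state can outlive the current process or session.
        return json.dumps(asdict(self), indent=2)

    @classmethod
    def from_json(cls, text: str) -> "AgentState":
        # Rebuild the state at the start of the next session.
        return cls(**json.loads(text))
```

Writing the JSON to durable storage between sessions is what would make the state persistent; where it is stored is left open here.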

The Toolset (The Hands)

A bounded capability set—Git, Shell, Browser, Compiler. While tools like Maven and shell scripts are ancient, the agent’s power depends on how effectively it wields them.

The Sandbox (The Environment)

An ephemeral, air‑gapped container where work actually happens—and where security is enforced.

Managerial Insight: Competitive advantage is determined by tool fidelity and the quality of context supplied to the state graph. Bigger context windows don’t guarantee better results; curation matters as much as quantity.

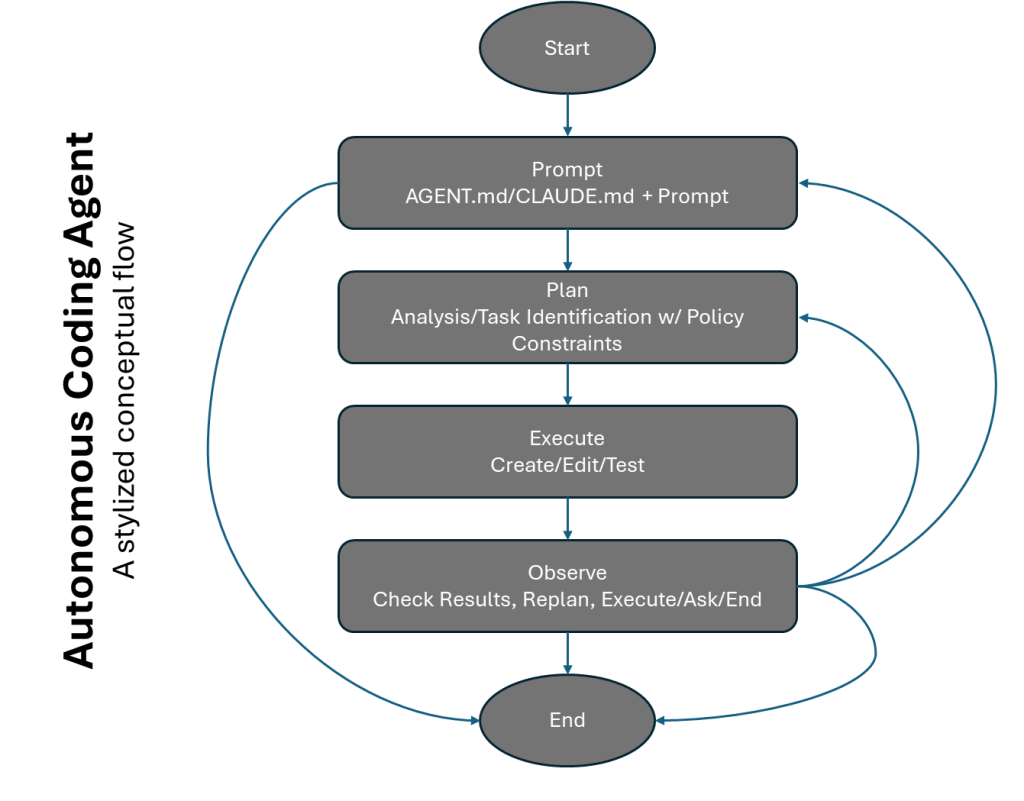

The Agentic Loop: Act → Observe → Correct

- Junior Agent: Typically fails after ~3 cycles and may hallucinate success.

- Senior Agentic System: Sustains 50+ cycles, autonomously researching APIs and iterating until tests pass.
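The loop itself is simple to sketch. In the version below, which is an assumption-laden illustration rather than any product's implementation, `act` stands in for the model proposing a change and `observe` for an external check such as a test run. Success is decided only by `observe`, never by the agent's own claim, which is what guards against "hallucinated success". The names `run_agent_loop` and `LoopResult` are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class LoopResult:
    succeeded: bool
    cycles: int
    history: list[str]


def run_agent_loop(
    act: Callable[[list[str]], str],
    observe: Callable[[str], tuple[bool, str]],
    max_cycles: int = 50,
) -> LoopResult:
    """Run Act -> Observe -> Correct until the check passes or the budget ends."""
    history: list[str] = []
    for cycle in range(1, max_cycles + 1):
        attempt = act(history)               # Act: propose a change using past feedback
        passed, feedback = observe(attempt)  # Observe: external check, e.g. the test suite
        if passed:
            return LoopResult(True, cycle, history)
        history.append(feedback)             # Correct: feed the failure into the next attempt
    return LoopResult(False, max_cycles, history)
```

The `max_cycles` budget is the exit condition that keeps a stuck agent from retrying indefinitely.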

Governance: The “Service Account” Model

To prevent destructive behavior (e.g., deleting production), apply the Service Account Principle:

- Identity: Agents must have dedicated Git identities and Identity & Access Management (IAM) permissions. Never use a developer’s credentials.

- Tool‑Level Role-Based Access Control (RBAC): Restrict the tools, not the prompts. If the agent lacks push‑to‑main rights, it cannot perform the action.

- Human‑in‑the‑Loop: High‑risk actions (deploys, billing changes) must require explicit human acknowledgment.
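A minimal sketch of the three rules above, assuming a single gate that every tool call passes through. The `AgentIdentity` class, the `invoke_tool` function, and the tool names are hypothetical. A real deployment would enforce the same checks in IAM and Git permissions rather than in application code alone.

```python
from dataclasses import dataclass
from typing import Callable, TypeVar

T = TypeVar("T")


class ToolDenied(PermissionError):
    """Raised when an agent identity may not run a tool."""


@dataclass(frozen=True)
class AgentIdentity:
    name: str                                     # dedicated identity, not a developer's
    allowed_tools: frozenset[str]                 # tool-level allow-list
    needs_approval: frozenset[str] = frozenset()  # high-risk tools gated by a human


def invoke_tool(
    identity: AgentIdentity,
    tool: str,
    run: Callable[[], T],
    approve: Callable[[AgentIdentity, str], bool] = lambda identity, tool: False,
) -> T:
    """Run `run` only if the identity is permitted and, if needed, approved."""
    if tool not in identity.allowed_tools:
        raise ToolDenied(f"{identity.name} may not use {tool}")
    if tool in identity.needs_approval and not approve(identity, tool):
        raise ToolDenied(f"{tool} requires explicit human approval")
    return run()
```

Note that approval defaults to "no": a high-risk action that nobody acknowledged is refused, whatever the prompt said.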

Groundwork Before an “AI Transformation” – agentic or otherwise

| If your repo has… | The Agent will… |
| --- | --- |
| 0% Test Coverage | Hallucinate working code and create massive technical debt. |
| Flaky CI/CD | Get stuck in “Loop Hell,” burning tokens and money on retries. |
| Spaghetti Architecture | Struggle to map the state, leading to “context drift.” |
| 90% Test Coverage | Perform like a Senior Engineer, self-correcting until green. |

Your job has evolved: You are now a Platform Engineer for Agents. Or the Chaperone. Make the codebase “Agent‑Readable” with clear types, fast tests, and deterministic builds.

Strategic Metrics for 2026

Lines of Code are vanity. In 2026, measure:

Cost per Successful Outcome

(Total Token Cost + Compute Cost) / (Merged PRs), with both costs in the same currency.

Containment Rate

% of tickets resolved by agents without human intervention.

Loop Efficiency

How many cycles does the agent take to find a solution? (Lower is better).

Review Overhead

Minutes humans spend reviewing Agent-PRs.
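To make the four metrics concrete, here is a small sketch that computes them from per-run records. The `AgentRun` fields and the `summarize` function are hypothetical, and several definitions are my assumptions: costs are in one currency, containment counts merged runs with no human intervention as a share of all runs, and loop efficiency is the mean cycle count of merged runs.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AgentRun:
    token_cost: float       # spend on model tokens
    compute_cost: float     # sandbox and CI compute spend, same currency
    cycles: int             # Act -> Observe -> Correct iterations used
    merged: bool            # did the run end in a merged PR?
    human_intervened: bool  # did a person step in before resolution?
    review_minutes: float   # human time spent reviewing the resulting PR


def summarize(runs: list[AgentRun]) -> dict[str, Optional[float]]:
    """Compute the four metrics; a value is None when it is undefined."""
    merged = [r for r in runs if r.merged]
    contained = [r for r in merged if not r.human_intervened]
    # Failed runs still cost money, so total spend covers every run.
    total_cost = sum(r.token_cost + r.compute_cost for r in runs)
    return {
        "cost_per_successful_outcome": total_cost / len(merged) if merged else None,
        "containment_rate": len(contained) / len(runs) if runs else None,
        "mean_cycles_to_solution": (
            sum(r.cycles for r in merged) / len(merged) if merged else None
        ),
        "review_minutes_total": sum(r.review_minutes for r in runs),
    }
```

Charging failed runs to the numerator is a deliberate choice in this sketch: an agent that burns tokens on abandoned attempts should look more expensive per merged PR, not less.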

Conclusion: The Path Forward

The 2024 panic that “AI will replace coders” is behind us. In 2026, we know the truth: AI replaces the mason but empowers the architect.

We aren’t deploying magic. We are deploying Better‑Than‑Probabilistic Orchestration – try, measure, correct, loop until the exit condition is met.

Success requires shifting from a Prompt‑First mindset to a System‑First → Prompt‑Right → Tool‑Granular mindset. The tools may be old, but the right tool granularity, paired with a prompt tuned to the model’s quirks, remains the master key.